Do More With Your Events

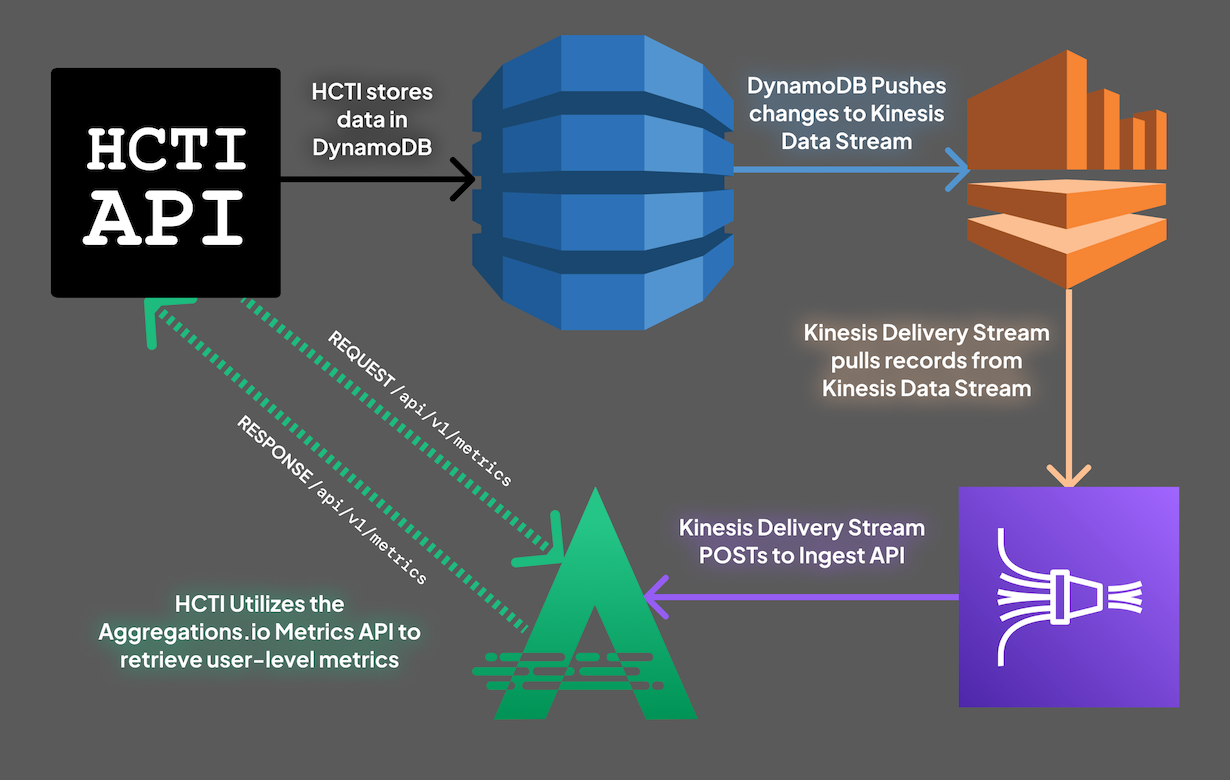

Real Time Metrics + Automated Documentation, using your existing data pipeline.

No SDK or complex setup required.

Real Time Metrics

Analytics events aren't just for BI.

Level up observability and feed real-time data into your product, all from your existing events.

- Dashboards & Alerting powered by Grafana

- Continuous re-training of ML models

- High-performance customer dashboards

AutoDocs (New)

Supercharge your team with fully documented and searchable event schemas, automatically generated and kept up to date.

- Schemas for ALL your events and properties

- Don't waste time "figuring out" event structures

- Review changes between releases

Why Choose Aggregations.io?

There are many options to choose from when building out your data stack. Here's why you should include us.

Flexibility

Send JSON. We'll make it useful.

We don't expect you to install an SDK or write a transformation layer.

Security

We don't want or need your raw data.

Security & privacy are in our DNA.

Easy

We obsess, so you don't have to.

Data is complicated enough; we won't add yet another layer of complexity.

Simple Pricing

We strive for understandable and predictable pricing. No tiered services, no differentiated features. Just pay for the quantity you need. Everyone gets the same great service.